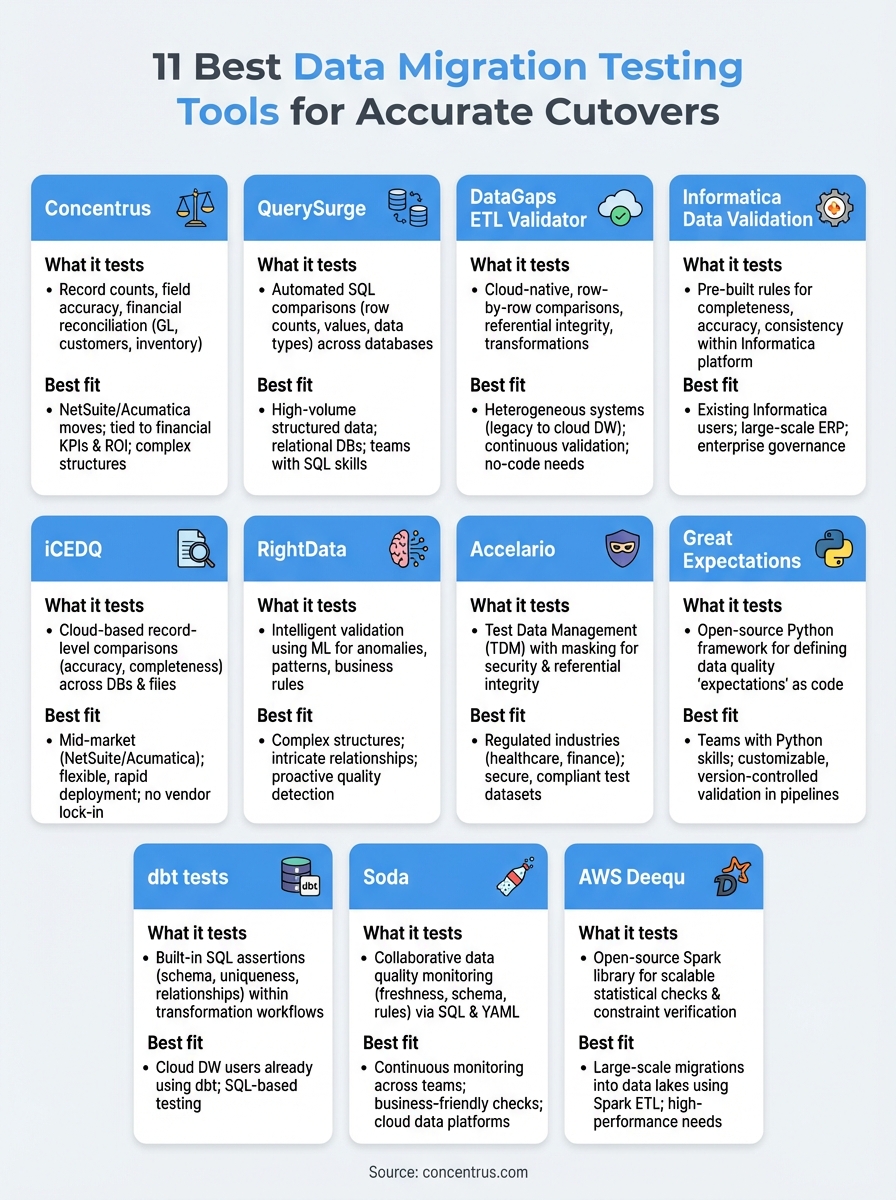

Data migration can make or break an ERP implementation. When you’re moving years of financial records, customer data, and operational history from one system to another, accuracy isn’t optional; it’s everything. The right data migration testing tools help you catch errors before they become costly problems, validating that every record transfers correctly and completely.

At Concentrus, we’ve seen what happens when data migration goes wrong. Failed cutovers delay go-lives, frustrate teams, and erode confidence in new ERP investments. For CFOs and finance leaders at midsized companies, the stakes are particularly high: your financial close, reporting accuracy, and operational continuity all depend on clean, validated data from day one.

This guide covers 11 data migration testing tools that can help you automate validation, detect discrepancies, and ensure data integrity throughout your migration process. Whether you’re implementing NetSuite, Acumatica, or another ERP platform, these tools offer the verification capabilities you need for a confident, accurate cutover.

1. Concentrus

Concentrus offers end-to-end data migration validation through their ROI Roadmap™ methodology, combining expert-led testing with systematic verification processes tailored for NetSuite and Acumatica ERP implementations. Rather than providing standalone software, Concentrus delivers comprehensive migration testing services that integrate automated tools with hands-on financial and operational expertise to ensure your cutover meets specific ROI targets and financial accuracy standards.

What it tests and how it works

Concentrus validates record counts, field-level accuracy, and financial reconciliation across every phase of your migration. Their testing approach verifies that general ledger balances, customer records, vendor data, inventory levels, and transaction histories transfer correctly from your legacy system to your new ERP platform. You get automated data comparison scripts combined with manual verification by ERP specialists who understand both the technical requirements and the financial implications of data errors.

The methodology includes pre-migration data profiling to identify quality issues early, parallel testing during implementation, and post-migration reconciliation to confirm complete accuracy. Their team creates custom validation rules based on your specific chart of accounts, business processes, and reporting requirements, ensuring that every critical data element passes verification before go-live.
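As a rough illustration of what post-migration financial reconciliation involves, the sketch below compares per-account balances between a legacy trial balance and the new ERP. It is not Concentrus tooling; the function name, account numbers, and amounts are hypothetical, and a real reconciliation would pull balances from both systems rather than from in-memory dictionaries.

```python
from decimal import Decimal


def reconcile_balances(source, target, tolerance=Decimal("0.00")):
    """Return (account, source_balance, target_balance) for every account that disagrees."""
    mismatches = []
    for account in sorted(set(source) | set(target)):
        src = source.get(account)
        tgt = target.get(account)
        # An account missing on either side, or off by more than the tolerance, is a mismatch.
        if src is None or tgt is None or abs(src - tgt) > tolerance:
            mismatches.append((account, src, tgt))
    return mismatches


# Hypothetical trial balances keyed by account number (Decimal avoids float rounding).
legacy = {
    "1000": Decimal("125000.50"),
    "2000": Decimal("-48000.50"),
    "4000": Decimal("-77000.00"),
}
erp = {
    "1000": Decimal("125000.50"),
    "2000": Decimal("-48000.05"),  # digits transposed during the load
    "4000": Decimal("-77000.00"),
}

for account, src, tgt in reconcile_balances(legacy, erp):
    print(f"Account {account}: legacy={src} erp={tgt}")
```

Matching row counts would not catch the error in account 2000, which is why balance-level checks sit alongside count checks.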

"Testing isn’t just about matching row counts. It’s about ensuring your financial close can happen on schedule with accurate numbers on day one."

Best fit and typical migration scenarios

You’ll benefit most from Concentrus if you’re moving to NetSuite or Acumatica and need migration testing tied directly to financial KPIs and business outcomes. Their services work particularly well for midsized companies with complex data structures, multiple subsidiaries, or strict compliance requirements where migration errors could impact financial reporting or audit results.

Typical scenarios include full ERP replacements where you’re migrating decades of historical data, ERP rescue situations where previous migration attempts failed, and phased rollouts requiring validated data cutover for each business unit. Concentrus handles migrations involving multi-currency transactions, intercompany eliminations, and industry-specific data requirements across manufacturing, distribution, and professional services sectors.

Strengths and trade-offs

Concentrus brings deep NetSuite and Acumatica platform knowledge combined with financial expertise that most data migration testing tools lack. You get testing protocols designed around actual business outcomes like accurate financial closes, improved cash flow visibility, and reliable reporting rather than just technical data validation. Their team understands what CFOs need from migration testing and structures validation to prove ROI delivery.

The trade-off centers on service-based delivery rather than self-service software. You’ll work directly with consultants who manage testing protocols, which provides expert guidance but requires coordination with external resources. This approach costs more than licensing standalone testing software but delivers validation expertise specifically for ERP implementations where financial accuracy drives project success.

Pricing and licensing model

Concentrus structures project-based pricing tied to your migration scope, data complexity, and timeline requirements. You’ll receive a fixed-price proposal based on the number of records, data entities, validation rules, and testing cycles required for your specific NetSuite or Acumatica implementation. Their pricing includes all testing services, from initial data profiling through post-cutover validation, with no separate software licensing fees.

Project costs typically align with overall ERP implementation budgets, representing a fraction of your total investment while protecting against costly migration failures. Because pricing connects to guaranteed ROI outcomes, you can justify migration testing expenses against measurable financial benefits like faster month-end closes and reduced reconciliation time. Most midsized companies invest between $25,000 and $150,000 for complete migration testing services depending on data volume and complexity.

2. QuerySurge

QuerySurge delivers automated data validation for ERP migrations through SQL-based testing that compares source and target databases at scale. This platform focuses on database-level verification, running automated queries to validate record counts, data types, and field values across your entire migration without requiring manual spreadsheet comparisons. You can set up thousands of validation tests that run automatically, flagging discrepancies for review before your cutover date.

What it tests and how it works

QuerySurge executes paired SQL queries against both your legacy system and new ERP platform, comparing results to identify mismatches. The tool validates row counts, column values, data types, and aggregate calculations like sums and averages to ensure complete transfer accuracy. You define test cases through a visual interface that generates comparison queries automatically, then schedule these tests to run during migration cycles.

The platform supports cross-database validation, meaning you can compare data between different database types like Oracle to PostgreSQL or SQL Server to MySQL. QuerySurge stores test results in a central repository where you can track validation history, generate reports for stakeholders, and drill down into specific discrepancies to understand what failed and why.
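The paired-query idea can be sketched in a few lines of Python. This is not QuerySurge’s interface; it only illustrates the technique, using two in-memory SQLite databases as stand-ins for the legacy system and the new ERP. The invoices table, its columns, and the test names are assumptions made for the example.

```python
import sqlite3


def run_paired_test(source_conn, target_conn, source_sql, target_sql):
    """Run one query per side and report whether the result sets match."""
    source_rows = source_conn.execute(source_sql).fetchall()
    target_rows = target_conn.execute(target_sql).fetchall()
    return {"passed": source_rows == target_rows, "source": source_rows, "target": target_rows}


def make_db(rows):
    """Build an in-memory database holding a hypothetical invoices table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE invoices (id INTEGER PRIMARY KEY, customer TEXT, amount_cents INTEGER)")
    conn.executemany("INSERT INTO invoices VALUES (?, ?, ?)", rows)
    return conn


source = make_db([(1, "Acme", 120000), (2, "Globex", 45050), (3, "Acme", 9900)])
target = make_db([(1, "Acme", 120000), (2, "Globex", 45050)])  # invoice 3 was dropped

# Each test pairs a source query with a target query; here both sides share the same SQL.
tests = {
    "row_count": "SELECT COUNT(*) FROM invoices",
    "total_by_customer": "SELECT customer, SUM(amount_cents) FROM invoices GROUP BY customer ORDER BY customer",
    "globex_total": "SELECT SUM(amount_cents) FROM invoices WHERE customer = 'Globex'",
}
results = {name: run_paired_test(source, target, sql, sql) for name, sql in tests.items()}

for name, result in results.items():
    status = "PASS" if result["passed"] else "FAIL"
    print(name, status, result["source"], result["target"])
```

In a cross-database migration the two SQL strings would usually differ, since table names, column names, and dialects rarely line up exactly between source and target.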

"Automated query-based testing eliminates the manual effort of sample-checking records while providing comprehensive validation coverage across your entire data set."

Best fit and typical migration scenarios

QuerySurge works best when you’re migrating structured data between relational databases and need automated validation at volume. This tool fits ERP implementations where millions of records require verification and manual testing becomes impractical. Companies with technical resources who can write SQL queries or customize test cases will extract the most value from QuerySurge’s capabilities.

Strengths and trade-offs

The platform excels at high-volume automated testing and provides detailed mismatch reporting that helps you quickly identify data quality issues. QuerySurge handles complex validations like multi-table joins and aggregate comparisons that would take days to verify manually. However, you’ll need SQL knowledge to create sophisticated test cases, and the tool focuses primarily on database validation rather than business logic or workflow testing.

Pricing and licensing model

QuerySurge uses subscription-based licensing with pricing that scales based on the number of data sources and concurrent users. Most implementations require annual contracts starting around $50,000 for basic configurations, with costs increasing for larger environments or additional features. You’ll pay separately for professional services if you need help configuring tests or integrating QuerySurge with your specific ERP platforms.

3. DataGaps ETL Validator

DataGaps ETL Validator provides cloud-native data validation specifically built for ETL and migration testing across diverse database platforms. This tool automates row-by-row comparisons between source and target systems, validating data accuracy without requiring you to write custom scripts or manage complex testing infrastructure. You can deploy DataGaps directly from your browser, connecting to on-premise databases, cloud data warehouses, and hybrid environments through secure agents.

What it tests and how it works

DataGaps validates field-level accuracy, referential integrity, and data transformations by comparing source and target datasets automatically. The platform runs parallel validation tests across multiple tables simultaneously, checking for missing records, duplicate entries, and data type mismatches. You configure validation rules through a visual interface that requires no coding, defining which tables to compare and what tolerance levels to accept for numeric differences.

The tool generates real-time discrepancy reports that highlight exactly which records failed validation and why. DataGaps tracks changes over time, letting you retest after remediation to confirm fixes worked correctly. This approach helps you validate not just initial migration loads but also incremental updates during phased implementations.
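To make the row-by-row comparison concrete, here is a minimal sketch of keyed record matching with a numeric tolerance. It is a generic illustration rather than DataGaps code; the function name, the sku key, and the sample records are hypothetical.

```python
from collections import Counter


def compare_rows(source_rows, target_rows, key, tolerance=0.0):
    """Compare two lists of dict rows by key; report missing, duplicate, and mismatched records."""
    key_counts = Counter(row[key] for row in target_rows)
    target_by_key = {row[key]: row for row in target_rows}
    report = {
        "missing": [],
        "duplicates": sorted(k for k, count in key_counts.items() if count > 1),
        "mismatches": [],
    }
    for src in source_rows:
        tgt = target_by_key.get(src[key])
        if tgt is None:
            report["missing"].append(src[key])
            continue
        for field, src_value in src.items():
            tgt_value = tgt.get(field)
            both_numeric = all(
                isinstance(v, (int, float)) and not isinstance(v, bool)
                for v in (src_value, tgt_value)
            )
            # Numeric fields may differ by up to the tolerance; everything else must match exactly.
            if both_numeric:
                differs = abs(src_value - tgt_value) > tolerance
            else:
                differs = src_value != tgt_value
            if differs:
                report["mismatches"].append((src[key], field, src_value, tgt_value))
    return report


source_rows = [
    {"sku": "A-1", "qty": 10, "unit_cost": 2.50},
    {"sku": "B-2", "qty": 4, "unit_cost": 19.99},
    {"sku": "C-3", "qty": 7, "unit_cost": 0.75},
]
target_rows = [
    {"sku": "A-1", "qty": 10, "unit_cost": 2.504},  # within the 0.01 tolerance
    {"sku": "B-2", "qty": 5, "unit_cost": 19.99},   # quantity changed in flight
    {"sku": "B-2", "qty": 5, "unit_cost": 19.99},   # loaded twice
]

report = compare_rows(source_rows, target_rows, key="sku", tolerance=0.01)
print(report)
```

The tolerance is a business decision as much as a technical one: a rounding difference that is acceptable for unit costs may not be acceptable for ledger balances.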

"Visual configuration eliminates the technical barrier to comprehensive data validation, letting business analysts verify migration accuracy without SQL expertise."

Best fit and typical migration scenarios

DataGaps works well when you’re migrating between heterogeneous systems like moving from legacy databases to cloud data warehouses such as Snowflake or Redshift. The platform fits scenarios where you need continuous validation during iterative migration cycles rather than one-time testing. Teams without dedicated data engineers benefit from DataGaps’ no-code interface and pre-built validation templates.

Strengths and trade-offs

The platform delivers browser-based accessibility with minimal infrastructure requirements, letting you start validation testing quickly without extensive setup. DataGaps supports wide database compatibility, connecting to both traditional and modern data platforms through standard protocols. However, the tool focuses primarily on data comparison rather than testing complex business logic or application workflows, which limits its usefulness for validating transactional behavior in your new ERP system.

Pricing and licensing model

DataGaps offers subscription licensing based on the number of data sources you need to validate and your total data volume. Pricing typically starts around $20,000 annually for small deployments with 2-3 database connections, scaling up based on complexity and concurrent validation jobs. You can purchase additional capacity as needed, paying only for the resources your migration requires.

4. Informatica Data Validation

Informatica Data Validation provides enterprise-grade testing capabilities integrated directly into Informatica’s broader data integration platform. This tool automates comparison testing between source and target systems, validating data accuracy through pre-built rules and custom validation logic. You can leverage Informatica’s existing connectors and transformation engine to validate data during migration while maintaining centralized test management across your entire data ecosystem.

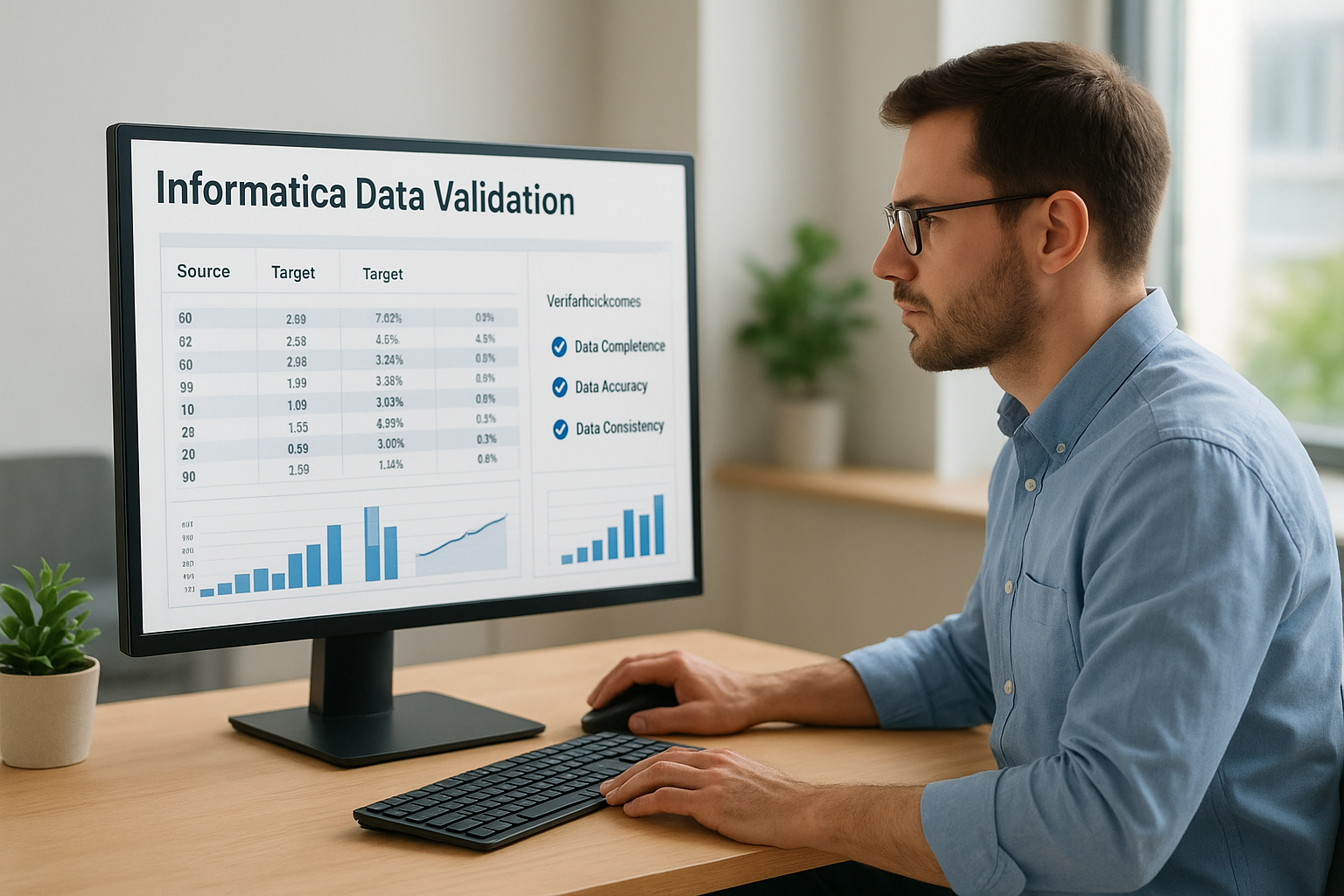

What it tests and how it works

Informatica validates data completeness, accuracy, and consistency by executing automated test cases against both source and target databases. The platform runs column-level comparisons, aggregate calculations, and referential integrity checks to ensure your migration maintains data quality standards. You configure validation rules through Informatica’s design interface, defining acceptable variance thresholds and business logic that must pass before cutover approval.

The tool integrates with Informatica PowerCenter or Cloud Data Integration, letting you reuse existing transformation logic for validation testing. Informatica tracks test results in a centralized repository where you can generate compliance reports, monitor validation progress, and document test coverage for audit purposes.

"Integration with your existing ETL infrastructure eliminates duplicate effort and ensures validation logic matches your actual migration transformations."

Best fit and typical migration scenarios

Informatica fits best when you’re already using Informatica products for data integration and want unified tooling across migration and validation activities. This platform works well for large-scale ERP implementations where governance requirements demand documented testing and multiple stakeholders need visibility into validation results. Organizations with complex data transformation requirements benefit from reusing PowerCenter mappings as validation logic.

Strengths and trade-offs

The platform delivers deep integration with Informatica’s ecosystem, providing seamless workflows between data migration and testing activities. Informatica offers enterprise-level governance features including audit trails, role-based access controls, and comprehensive reporting capabilities. However, you’ll face significant licensing costs if you’re not already an Informatica customer, and the platform requires specialized Informatica expertise to configure and operate effectively compared to simpler data migration testing tools.

Pricing and licensing model

Informatica uses enterprise licensing with pricing based on the number of connections, data volume, and additional features required. You’ll typically purchase validation capabilities as part of broader Informatica platform subscriptions, with annual costs ranging from $100,000 to $500,000+ depending on your environment’s scale and complexity. Expect additional professional services fees for implementation and configuration support.

5. iCEDQ

iCEDQ offers cloud-based data validation designed specifically for ETL testing and migration projects across relational databases, data warehouses, and file-based systems. This platform automates record-level comparisons between source and target environments, providing visual dashboards that track validation progress and highlight discrepancies in real time. You can deploy iCEDQ quickly through its web interface, connecting to your data sources without installing agent software or managing complex infrastructure.

What it tests and how it works

iCEDQ validates data accuracy, completeness, and transformation logic by executing automated comparison rules against both source and target systems. The platform runs column-by-column validation checks, verifying that data types match, null values transfer correctly, and numeric calculations produce expected results. You configure test cases through a browser-based interface that lets you define matching keys, set tolerance thresholds for numeric variances, and establish validation sequences for dependent tables.

The tool performs batch and incremental validation, letting you test full migration loads initially and then validate daily delta changes during cutover periods. iCEDQ generates exception reports that show exactly which records failed validation, displaying side-by-side comparisons of source and target values to speed remediation. You can rerun tests automatically after fixes to confirm data quality improvements.

"Browser-based deployment reduces IT overhead while providing validation capabilities that scale from small migrations to enterprise-wide ERP cutovers."

Best fit and typical migration scenarios

iCEDQ works best when you need flexible validation across multiple database types and want to avoid vendor lock-in to specific data migration testing tools. This platform fits mid-market companies implementing cloud ERPs like NetSuite or Acumatica who need comprehensive testing without the complexity of enterprise-grade solutions. Teams with basic SQL knowledge can configure validation rules effectively, though the platform doesn’t require coding for standard comparison tests.

Strengths and trade-offs

The platform delivers rapid deployment with minimal technical setup, letting you start validation testing within days rather than weeks. iCEDQ supports diverse data sources including flat files, APIs, and modern cloud data platforms alongside traditional databases. However, the tool focuses on data comparison rather than testing business processes or application workflows, which means you’ll need separate approaches to validate transactional behavior in your new ERP system.

Pricing and licensing model

iCEDQ uses subscription pricing based on the number of data sources and validation volume you require. Annual contracts typically start around $30,000 for small implementations with 3-5 database connections, scaling up based on complexity and testing frequency. You can purchase additional capacity or upgrade tiers as your migration scope expands, providing flexibility to match licensing costs with project timelines.

6. RightData

RightData delivers intelligent data testing through automated validation rules that adapt to your data patterns and business logic requirements. This platform combines machine learning capabilities with traditional comparison testing to identify anomalies, validate transformations, and ensure data quality throughout your migration process. You can configure RightData to monitor data consistency across multiple systems simultaneously, providing continuous validation during phased ERP implementations.

What it tests and how it works

RightData validates data accuracy, referential integrity, and business rule compliance by executing automated test scenarios against your source and target environments. The platform performs record-level comparisons, aggregate validations, and pattern analysis to detect inconsistencies that standard data migration testing tools might miss. You define validation logic through a visual interface that lets you specify matching keys, set acceptable variance ranges, and establish dependencies between related tables.

The tool uses adaptive testing algorithms that learn from your data patterns over time, automatically suggesting validation rules based on observed relationships and constraints. RightData generates detailed exception reports showing exactly where discrepancies occur, with drill-down capabilities that let you investigate specific records and understand root causes quickly.

"Intelligent validation reduces false positives by understanding your data’s natural patterns and focusing attention on genuine quality issues that impact business outcomes."

Best fit and typical migration scenarios

RightData works best when you’re migrating complex data structures with intricate relationships and need validation that goes beyond simple field comparisons. This platform fits scenarios where data quality issues require sophisticated detection logic and you want testing that improves as it learns your data patterns. Teams implementing cloud-based ERPs benefit from RightData’s ability to validate data across hybrid environments during transition periods.

Strengths and trade-offs

The platform delivers adaptive testing capabilities that reduce manual configuration effort and improve detection accuracy over time. RightData handles complex validation scenarios including multi-table joins and calculated field verification without requiring extensive custom scripting. However, the machine learning features require initial training periods to establish baseline patterns, which means you’ll see optimal performance after running multiple validation cycles rather than immediately during first deployment.

Pricing and licensing model

RightData uses subscription licensing with pricing based on data volume and the number of validation rules you deploy. Annual contracts typically start around $40,000 for standard implementations, with costs increasing based on environment complexity and required testing frequency. You can scale licensing up or down based on project phases, paying for peak capacity during active migration periods and reducing costs during maintenance phases.

7. Accelario

Accelario provides test data management with integrated validation capabilities designed for large-scale ERP migrations where data security and compliance matter alongside accuracy. This platform combines data masking, subsetting, and validation into a unified solution that lets you create secure test environments while verifying migration quality. You can generate production-like datasets for testing without exposing sensitive information, then validate that masked and migrated data maintains referential integrity and business logic requirements.

What it tests and how it works

Accelario validates referential integrity, data relationships, and business rule compliance across your migration while simultaneously creating secure test datasets. The platform performs automated relationship discovery to map dependencies between tables, then validates that these relationships remain intact after data transformations and masking operations. You configure validation rules that check for orphaned records, broken foreign keys, and constraint violations that could cause system failures post-cutover.
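An orphaned-record check of the kind described above usually comes down to an anti-join between a child table and its parent. The sketch below shows the idea with SQLite; it is not Accelario’s implementation, and the customers and orders tables are hypothetical.

```python
import sqlite3

# Orders whose customer_id has no matching row in customers are orphans.
ORPHAN_SQL = """
SELECT o.order_id, o.customer_id
FROM orders AS o
LEFT JOIN customers AS c ON c.customer_id = o.customer_id
WHERE c.customer_id IS NULL
ORDER BY o.order_id
"""


def find_orphans(conn):
    """Return (order_id, customer_id) pairs that point at a missing customer."""
    return conn.execute(ORPHAN_SQL).fetchall()


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER);
INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
INSERT INTO orders VALUES (100, 1), (101, 2), (102, 3);
""")

orphans = find_orphans(conn)
print(orphans)
```

Running the same check before and after masking or subsetting shows whether those operations broke a relationship that was intact in the source.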

The tool generates subset databases that maintain referential integrity while reducing data volumes for faster testing cycles. Accelario validates both the accuracy of data transfers and the effectiveness of your masking rules, ensuring that sensitive information gets properly anonymized without breaking application functionality.

"Combined data masking and validation lets you test migration accuracy in secure environments that protect customer privacy while maintaining realistic data patterns."

Best fit and typical migration scenarios

Accelario works best when you’re migrating regulated data in industries like healthcare, finance, or retail where compliance requirements prohibit testing with production information. This platform fits large-scale implementations where you need multiple test environments with different data subsets for parallel testing. Organizations concerned about data privacy benefit from Accelario’s ability to validate migrations using masked datasets that eliminate security risks.

Strengths and trade-offs

The platform delivers comprehensive test data management alongside validation, providing capabilities that most standalone data migration testing tools lack. Accelario excels at maintaining referential integrity when creating subsets, which proves crucial for testing complex ERP workflows accurately. However, the platform requires significant configuration effort to set up masking rules and relationship mappings initially, and licensing costs reflect its enterprise-grade feature set rather than basic comparison testing needs.

Pricing and licensing model

Accelario uses enterprise licensing with pricing based on database size, number of environments, and required features. Annual contracts typically start around $80,000 for standard deployments, scaling up based on data volume and complexity. You’ll receive both validation and test data management capabilities in the license, which provides value if you need comprehensive testing infrastructure beyond basic migration verification.

8. Great Expectations

Great Expectations delivers open-source data validation through a Python-based framework that lets you define, document, and test data quality expectations programmatically. This platform transforms how you validate migrations by treating data quality as code, allowing you to version control your validation rules alongside your ERP implementation. You can build comprehensive test suites that verify data accuracy, completeness, and consistency without paying licensing fees or depending on proprietary software.

What it tests and how it works

Great Expectations validates data quality rules that you define as "expectations" in Python code or JSON configuration files. The framework checks null values, data types, value ranges, uniqueness constraints, and custom business logic against your migrated datasets. You create expectation suites that describe what your data should look like, then run these tests against both source and target databases to identify discrepancies.

The platform generates data documentation automatically from your expectations, creating human-readable reports that explain what you tested and what passed or failed. Great Expectations connects to SQL databases, pandas DataFrames, and Spark, letting you validate data wherever it lives. You can schedule automated validation runs that execute during migration cycles and send alerts when tests fail.
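The sketch below illustrates the expectations-as-code idea in plain Python: a suite is declared once and then run against both the source and the target. It deliberately does not use the Great Expectations API, whose entry points vary between releases; every function name and sample record here is hypothetical.

```python
def expect_not_null(column):
    """Expectation: every row has a value in the column."""
    return (f"{column} is not null", lambda rows: all(r[column] is not None for r in rows))


def expect_unique(column):
    """Expectation: no value in the column repeats."""
    return (f"{column} is unique", lambda rows: len({r[column] for r in rows}) == len(rows))


def expect_between(column, low, high):
    """Expectation: every value is present and falls inside [low, high]."""
    return (
        f"{column} between {low} and {high}",
        lambda rows: all(r[column] is not None and low <= r[column] <= high for r in rows),
    )


def run_suite(suite, rows):
    """Evaluate each expectation and map its description to True or False."""
    return {name: check(rows) for name, check in suite}


# The suite lives in version control next to the migration scripts.
suite = [
    expect_not_null("email"),
    expect_unique("customer_id"),
    expect_between("credit_limit", 0, 1_000_000),
]

source_rows = [
    {"customer_id": 1, "email": "a@example.com", "credit_limit": 5000},
    {"customer_id": 2, "email": "b@example.com", "credit_limit": 20000},
]
target_rows = [
    {"customer_id": 1, "email": "a@example.com", "credit_limit": 5000},
    {"customer_id": 2, "email": None, "credit_limit": 20000},  # email lost during the load
]

source_results = run_suite(suite, source_rows)
target_results = run_suite(suite, target_rows)
print(source_results)
print(target_results)
```

Because the same suite runs on both sides, a failure that appears only in the target points at the migration rather than at pre-existing data quality problems.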

"Treating validation rules as code provides version control, collaboration capabilities, and reproducibility that spreadsheet-based testing approaches cannot match."

Best fit and typical migration scenarios

Great Expectations works best when you have Python development capabilities on your team and want customizable data migration testing tools that adapt to complex validation requirements. This framework fits scenarios where you need continuous validation integrated into automated migration pipelines rather than manual testing. Organizations implementing modern cloud ERPs with API-driven data loads benefit from Great Expectations’ ability to validate data at multiple pipeline stages.

Strengths and trade-offs

The platform delivers complete customization since you control validation logic through code and can extend functionality with custom expectations. Great Expectations provides no-cost licensing and active community support, eliminating budget constraints for comprehensive testing. However, you’ll need Python expertise to implement and maintain validation suites, and the framework requires more setup effort than commercial tools with graphical interfaces.

Pricing and licensing model

Great Expectations uses open-source licensing with no software costs for the core framework. You’ll invest engineering time to build and maintain validation suites rather than paying subscription fees. The project offers Great Expectations Cloud as a paid hosting option with additional collaboration features starting around $20,000 annually, though most teams run the open-source version on their own infrastructure at no cost beyond standard compute expenses.

9. dbt tests

dbt provides built-in data validation through tests: SQL-based assertions that run directly against your data warehouse during transformation workflows. This open-source framework lets you define quality checks as code, validating data accuracy and integrity within the same tool you use for data modeling and transformations. You can integrate validation testing seamlessly into your ERP migration pipeline, ensuring data quality gates prevent bad data from reaching production.

What it tests and how it works

dbt validates schema compliance, uniqueness constraints, and referential integrity through four built-in generic tests (unique, not_null, accepted_values, and relationships), with custom business logic covered by tests you write yourself. The framework runs SQL queries against your target database to verify that your data meets defined expectations after migration loads. You define tests in YAML configuration files alongside your data models, specifying which columns must be unique, which cannot contain nulls, and what relationships must exist between tables.

The platform executes automated test suites every time you run transformations, catching data quality issues immediately rather than discovering problems after cutover. dbt generates test documentation automatically and integrates with orchestration tools like Airflow to schedule validation runs during migration cycles. You can write custom tests using SQL to validate complex business rules specific to your ERP requirements.
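A dbt test is, at bottom, a query that selects failing rows: the test passes when the query returns nothing. The Python sketch below mimics the four built-in tests against an in-memory SQLite database to show that logic. It is not how dbt itself is configured (in dbt these tests are declared in YAML), and the bills and vendors tables are hypothetical. Identifiers are interpolated into SQL only because they are trusted constants in this example.

```python
import sqlite3


def unique_sql(table, column):
    return f"SELECT {column} FROM {table} WHERE {column} IS NOT NULL GROUP BY {column} HAVING COUNT(*) > 1"


def not_null_sql(table, column):
    return f"SELECT * FROM {table} WHERE {column} IS NULL"


def accepted_values_sql(table, column, values):
    quoted = ", ".join(f"'{value}'" for value in values)
    return f"SELECT {column} FROM {table} WHERE {column} NOT IN ({quoted})"


def relationships_sql(table, column, parent_table, parent_column):
    return (
        f"SELECT child.{column} FROM {table} AS child "
        f"LEFT JOIN {parent_table} AS parent ON child.{column} = parent.{parent_column} "
        f"WHERE child.{column} IS NOT NULL AND parent.{parent_column} IS NULL"
    )


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE vendors (vendor_id INTEGER PRIMARY KEY);
CREATE TABLE bills (bill_id INTEGER, vendor_id INTEGER, status TEXT);
INSERT INTO vendors VALUES (1), (2);
INSERT INTO bills VALUES (10, 1, 'open'), (11, 2, 'paid'), (11, 2, 'paid'), (12, 9, 'archived');
""")

tests = {
    "unique_bill_id": unique_sql("bills", "bill_id"),
    "not_null_vendor_id": not_null_sql("bills", "vendor_id"),
    "accepted_values_status": accepted_values_sql("bills", "status", ["open", "paid", "void"]),
    "relationships_vendor": relationships_sql("bills", "vendor_id", "vendors", "vendor_id"),
}

# Each result is the number of failing rows; zero means the test passes.
results = {name: len(conn.execute(sql).fetchall()) for name, sql in tests.items()}
for name, failures in results.items():
    print(name, "PASS" if failures == 0 else f"FAIL ({failures} failing rows)")
```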

"Integrating validation directly into your transformation pipeline ensures that data quality checks run consistently without requiring separate data migration testing tools or manual verification steps."

Best fit and typical migration scenarios

dbt tests work best when you’re using data warehouses like Snowflake, BigQuery, Redshift, or Databricks as your target environment and already running dbt for transformations. This approach fits cloud ERP implementations where you build transformation layers on top of raw migrated data. Teams with SQL expertise benefit from dbt’s code-based testing model, which provides version control and collaboration capabilities similar to software development workflows.

Strengths and trade-offs

The framework delivers native integration with modern data stacks, eliminating the need for separate validation infrastructure when you already use dbt. Testing with SQL provides complete flexibility to define any validation logic your database supports. However, dbt focuses on warehouse-based testing rather than comparing source and target systems directly, which means you’ll need additional approaches to validate initial data loads before transformation layers run.

Pricing and licensing model

dbt Core uses open-source licensing with no software costs for the testing framework. You’ll invest engineering time building test definitions rather than paying subscription fees. dbt Cloud offers hosted services starting around $100 per user monthly for team plans, providing scheduling, documentation hosting, and collaboration features beyond the core open-source capabilities.

10. Soda

Soda delivers data quality monitoring through automated checks that validate data accuracy, completeness, and consistency across your entire data pipeline. This platform combines SQL-based validation with collaborative workflows that let technical and business teams define quality expectations together, bridging the gap between data engineering and business requirements. You can deploy Soda to monitor data quality continuously during migration cycles, catching issues before they impact your ERP cutover.

What it tests and how it works

Soda validates data freshness, volume anomalies, schema compliance, and custom business rules by executing checks against your databases, data warehouses, and lakes. The platform runs SQL queries automatically on defined schedules, measuring metrics like row counts, null percentages, duplicate records, and value distributions. You define checks using Soda’s YAML-based configuration language that requires minimal SQL knowledge, specifying what constitutes acceptable data quality thresholds for your migration.

The tool integrates with orchestration platforms like Airflow and Prefect, letting you embed validation gates directly into your migration workflows. Soda sends alerts through Slack, email, or monitoring dashboards when checks fail, providing immediate notification of data quality issues. You can track quality metrics over time to identify trends and verify that remediation efforts improve data accuracy consistently.
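A validation gate of this kind can be pictured as a step that measures a few metrics and stops the pipeline when any of them crosses a threshold. The sketch below is plain Python, not Soda’s check language; the metric names, thresholds, and vendor records are assumptions chosen for illustration.

```python
class QualityGateError(Exception):
    """Raised when a metric exceeds its allowed maximum."""


def measure(rows, column):
    """Compute row count, percentage of missing values, and duplicate count for one column."""
    values = [row[column] for row in rows]
    present = [value for value in values if value is not None]
    missing_percent = 100.0 * (len(values) - len(present)) / len(values) if values else 0.0
    return {
        "row_count": len(values),
        "missing_percent": missing_percent,
        "duplicate_count": len(present) - len(set(present)),
    }


def enforce(metrics, max_allowed):
    """Raise QualityGateError if any metric is above its limit; otherwise return the metrics."""
    failures = [
        f"{name}={metrics[name]} exceeds {limit}"
        for name, limit in max_allowed.items()
        if metrics[name] > limit
    ]
    if failures:
        raise QualityGateError("; ".join(failures))
    return metrics


vendors = [{"tax_id": "11"}, {"tax_id": "22"}, {"tax_id": None}, {"tax_id": "22"}]
metrics = measure(vendors, "tax_id")
thresholds = {"missing_percent": 5.0, "duplicate_count": 0}

try:
    enforce(metrics, thresholds)
    gate_status = "passed"
except QualityGateError as error:
    gate_status = f"blocked: {error}"

print(metrics)
print(gate_status)
```

In an orchestrated pipeline, the raised exception is what would fail the task and keep downstream load steps from running.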

"Collaborative validation workflows ensure that business users and data engineers agree on quality expectations before migration begins, reducing post-cutover surprises."

Best fit and typical migration scenarios

Soda works best when you need continuous data quality monitoring throughout phased ERP implementations rather than one-time validation testing. This platform fits scenarios where multiple teams need visibility into data quality metrics and you want validation checks that run automatically without manual intervention. Organizations migrating to cloud data warehouses benefit from Soda’s native support for Snowflake, BigQuery, and Redshift alongside traditional databases.

Strengths and trade-offs

The platform delivers business-friendly check definitions that don’t require deep SQL expertise, making migration validation accessible to finance and operations teams. Soda provides continuous monitoring that extends beyond initial migration validation, giving you ongoing data quality assurance after cutover. However, the tool focuses on quality metrics and anomaly detection rather than detailed record-by-record comparisons, which means you’ll need supplementary approaches for field-level validation during initial data loads.
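One supplementary approach is a keyed, field-by-field comparison between the legacy extract and the loaded records. The sketch below is a hypothetical illustration in plain Python. The `compare_records` helper, the vendor fields, and the sample values are assumptions, and a real migration would read both sides from database extracts rather than in-memory lists.

```python
from decimal import Decimal


def compare_records(source, target, key, fields):
    """Compare two lists of dicts by key; report missing, extra, and mismatched fields."""
    source_by_key = {row[key]: row for row in source}
    target_by_key = {row[key]: row for row in target}

    missing = sorted(source_by_key.keys() - target_by_key.keys())
    extra = sorted(target_by_key.keys() - source_by_key.keys())

    mismatches = []
    for k in sorted(source_by_key.keys() & target_by_key.keys()):
        for field in fields:
            old, new = source_by_key[k][field], target_by_key[k][field]
            if old != new:
                mismatches.append((k, field, old, new))
    return {"missing": missing, "extra": extra, "mismatches": mismatches}


# Hypothetical vendor balances; Decimal avoids float rounding on currency.
legacy = [
    {"vendor_id": "V1", "name": "Acme", "balance": Decimal("100.00")},
    {"vendor_id": "V2", "name": "Globex", "balance": Decimal("250.50")},
    {"vendor_id": "V3", "name": "Initech", "balance": Decimal("75.00")},
]
erp = [
    {"vendor_id": "V1", "name": "Acme", "balance": Decimal("100.00")},
    {"vendor_id": "V2", "name": "Globex", "balance": Decimal("250.05")},
    {"vendor_id": "V4", "name": "Umbrella", "balance": Decimal("10.00")},
]

report = compare_records(legacy, erp, key="vendor_id", fields=["name", "balance"])
```

The report separates records that never arrived (`missing`), records with no source (`extra`), and transposed or altered values (`mismatches`), which are the three findings a finance team typically needs to reconcile.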

Pricing and licensing model

Soda offers freemium licensing with a free tier supporting basic checks and limited data sources. Paid plans start around $1,000 monthly for teams requiring advanced features like incident management and unlimited data sources. You can scale licensing based on the number of data sources monitored and validation checks executed, providing flexibility to match costs with migration timelines and ongoing quality monitoring needs.

11. AWS Deequ

AWS Deequ provides open-source data quality validation built specifically for Apache Spark environments, letting you define and execute data checks at scale within your big data processing pipelines. This Scala-based library integrates directly with Spark DataFrames, enabling you to validate millions of records efficiently during migration loads without moving data outside your processing environment. You can deploy Deequ on Amazon EMR, Databricks, or any Spark cluster to validate data quality as part of your ERP migration workflows.

What it tests and how it works

Deequ validates completeness, correctness, and consistency by running statistical checks and constraint verifications against your Spark datasets. The library performs column-level profiling that automatically generates suggestions for data quality rules based on observed patterns, then executes these rules as unit tests during migration processing. You define constraints programmatically in Scala, or in Python through the PyDeequ wrapper, specifying requirements like "customer_id must be unique" or "order_amount must be positive" that Deequ verifies across your entire dataset.
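The "constraints as unit tests" idea can be illustrated in plain Python. This is a conceptual sketch only and not Deequ's API, which runs against Spark DataFrames. The `is_unique`, `is_positive`, and `verify` helpers and the sample rows are hypothetical.

```python
def is_unique(rows, column):
    """True when no value in the column appears more than once."""
    values = [row[column] for row in rows]
    return len(values) == len(set(values))


def is_positive(rows, column):
    """True when every value in the column is present and greater than zero."""
    return all(row[column] is not None and row[column] > 0 for row in rows)


def verify(rows, constraints):
    """Evaluate each named constraint and return a pass/fail result per name."""
    return {description: check(rows) for description, check in constraints.items()}


# Hypothetical migrated rows; the second one carries a negative amount.
orders = [
    {"customer_id": 101, "order_amount": 250.0},
    {"customer_id": 102, "order_amount": -15.0},
    {"customer_id": 103, "order_amount": 80.0},
]

results = verify(
    orders,
    {
        "customer_id must be unique": lambda rows: is_unique(rows, "customer_id"),
        "order_amount must be positive": lambda rows: is_positive(rows, "order_amount"),
    },
)
```

Each constraint yields a named pass or fail, so a failing rule points straight at the business requirement it violates rather than at a raw query result.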

The framework calculates quality metrics incrementally as data flows through your Spark jobs, storing results for historical tracking and trend analysis. Deequ uses Apache Spark’s distributed computing capabilities to validate massive datasets quickly, processing validation checks in parallel across your cluster nodes. You can integrate verification results with monitoring systems to trigger alerts when quality thresholds fail during migration cycles.
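Incremental calculation relies on keeping a small, mergeable state per metric instead of rescanning earlier data. The sketch below shows that idea for a completeness metric in plain Python. It is an illustration under assumptions, not Deequ's implementation, and the `CompletenessState` class and sample batches are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CompletenessState:
    """Counts that are sufficient to compute completeness for a column."""

    non_null: int = 0
    total: int = 0

    def merge(self, other):
        # Adding the counts gives the state for the combined data.
        return CompletenessState(
            self.non_null + other.non_null, self.total + other.total
        )

    @property
    def completeness(self):
        # Treat an empty column as fully complete.
        return self.non_null / self.total if self.total else 1.0


def state_for_batch(values):
    """Scan one batch once and summarize it as a state."""
    return CompletenessState(sum(v is not None for v in values), len(values))


# Two hypothetical migration batches of an email column.
batches = (
    ["a@x.com", None, "b@x.com", "c@x.com"],
    ["d@x.com", "e@x.com", None, None],
)

running = CompletenessState()
history = []  # completeness after each batch, kept for trend tracking
for batch in batches:
    running = running.merge(state_for_batch(batch))
    history.append(running.completeness)
```

Only the new batch is scanned on each cycle, and the stored history shows whether completeness is improving or degrading as loads progress.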

"Native Spark integration eliminates data movement overhead while providing validation scalability that matches your migration processing capacity."

Best fit and typical migration scenarios

Deequ works best when you’re migrating large datasets into cloud data lakes or warehouses using Spark-based ETL pipelines. This library fits scenarios where you process millions or billions of records and need validation that scales without performance bottlenecks. Teams already running Spark for data transformation benefit from adding Deequ checks without introducing separate data migration testing tools or validation infrastructure.

Strengths and trade-offs

The library delivers strong performance for large-scale validation through native Spark optimization and parallel processing. Deequ provides automatic constraint suggestions that reduce initial setup effort by analyzing your data patterns and recommending relevant quality checks. However, you’ll need Spark expertise and Scala or Python development skills to implement validation logic effectively, and the tool requires Spark infrastructure rather than working with traditional databases directly.

Pricing and licensing model

Deequ uses Apache 2.0 open-source licensing with no software costs for the validation library itself. You’ll pay for compute resources on your Spark cluster based on Amazon EMR, Databricks, or self-managed infrastructure pricing. Validation costs depend on your data volume and cluster configuration, typically ranging from hundreds to thousands of dollars monthly for production-scale implementations processing large migration datasets continuously.

Next steps for a clean cutover

Selecting the right data migration testing tools is only the first step toward a successful ERP cutover. You need structured validation protocols that combine automated testing with expert verification of your financial and operational data. The tools in this guide provide the technical capabilities, but successful migrations require strategic planning that ties validation checkpoints to your specific business outcomes and ROI targets.

Your cutover accuracy depends on testing that catches errors early, validates transformations thoroughly, and confirms that every critical data element transfers correctly. Start by defining your validation requirements based on financial reporting needs, compliance obligations, and operational workflows. Then select tools that match your technical environment and data complexity while providing the verification depth your CFO needs to approve go-live with confidence.

Schedule a migration validation consultation with Concentrus to ensure your NetSuite or Acumatica implementation delivers accurate data from day one with guaranteed ROI outcomes.